Helper Functions / Motivations

aurellem ☉

1 Blender Utilities

In Blender, any object can be assigned an arbitrary number of key-value pairs, which are called "Custom Properties". These are accessible in jMonkeyEngine when Blender files are imported with the BlenderLoader. The meta-data function extracts these properties.

    (in-ns 'cortex.sense)

    (defn meta-data
      "Get the meta-data for a node created with blender."
      [blender-node key]
      (if-let [data (.getUserData blender-node "properties")]
        ;; this part is to accommodate weird blender properties
        ;; as well as sensible clojure maps.
        (.findValue data key)
        (.getUserData blender-node key)))

Blender uses a different coordinate system than jMonkeyEngine (Blender treats Z as up, while jMonkeyEngine treats Y as up), so it is useful to be able to convert between the two. These conversions only come into play when the meta-data of a node refers to a vector in the Blender coordinate system.

    (defn jme-to-blender
      "Convert from JME coordinates to Blender coordinates"
      [#^Vector3f in]
      (Vector3f. (.getX in) (- (.getZ in)) (.getY in)))

    (defn blender-to-jme
      "Convert from Blender coordinates to JME coordinates"
      [#^Vector3f in]
      (Vector3f. (.getX in) (.getZ in) (- (.getY in))))
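The axis swap is easy to check by hand. The following is a minimal sketch that mirrors the two conversions on plain Clojure vectors instead of Vector3f objects, so it runs without jMonkeyEngine on the classpath. The names jme->blender-vec and blender->jme-vec are hypothetical stand-ins and are not part of cortex.

```clojure
;; Hypothetical stand-ins for jme-to-blender / blender-to-jme that work on
;; plain [x y z] vectors, so no jMonkeyEngine classes are needed.
(defn jme->blender-vec
  "JME [x y z] -> Blender [x -z y]."
  [[x y z]]
  [x (- z) y])

(defn blender->jme-vec
  "Blender [x y z] -> JME [x z -y]."
  [[x y z]]
  [x z (- y)])

;; Blender's up axis (+Z) becomes JME's up axis (+Y).
(println (blender->jme-vec [0 0 1]))                    ; prints [0 1 0]

;; The two conversions undo each other.
(println (jme->blender-vec (blender->jme-vec [1 2 3]))) ; prints [1 2 3]
```

Because each function is the inverse of the other, a vector read from Blender meta-data can be converted once on the way in and converted back without loss.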

2 Sense Topology

Human beings are three-dimensional objects, and the nerves that transmit data from our various sense organs to our brain are essentially one-dimensional. This leaves up to two dimensions in which our sensory information may flow. For example, imagine your skin: it is a two-dimensional surface around a three-dimensional object (your body). It has discrete touch sensors embedded at various points, and the density of these sensors corresponds to the sensitivity of that region of skin. Each touch sensor connects to a nerve, all of which eventually are bundled together as they travel up the spinal cord to the brain. Intersect the spinal nerves with a guillotining plane and you will see all of the sensory data of the skin revealed in a roughly circular two-dimensional image which is the cross section of the spinal cord. Points on this image that are close together in this circle represent touch sensors that are probably close together on the skin, although there is of course some cutting and rearrangement that has to be done to transfer the complicated surface of the skin onto a two dimensional image.

Most human senses consist of many discrete sensors of various properties distributed along a surface at various densities. For skin, it is Pacinian corpuscles, Meissner's corpuscles, Merkel's disks, and Ruffini's endings, which detect pressure and vibration of various intensities. For ears, it is the stereocilia distributed along the basilar membrane inside the cochlea; each one is sensitive to a slightly different frequency of sound. For eyes, it is rods and cones distributed along the surface of the retina. In each case, we can describe the sense with a surface and a distribution of sensors along that surface.

2.1 UV-maps

Blender and jMonkeyEngine already have support for exactly this sort of data structure because it is used to "skin" models for games. It is called UV-mapping. The three-dimensional surface of a model is cut and smooshed until it fits on a two-dimensional image. You paint whatever you want on that image, and when the three-dimensional shape is rendered in a game the smooshing and cutting is reversed and the image appears on the three-dimensional object.

To make a sense, interpret the UV-image as describing the distribution of that sense's sensors. To get different types of sensors, you can either use a different color for each type of sensor, or use multiple UV-maps, each labeled with that sensor type. I generally use a white pixel to mean the presence of a sensor and a black pixel to mean the absence of a sensor, and use one UV-map for each sensor-type within a given sense. The paths to the images are not stored as the actual UV-map of the Blender object but are instead referenced in the meta-data of the node.

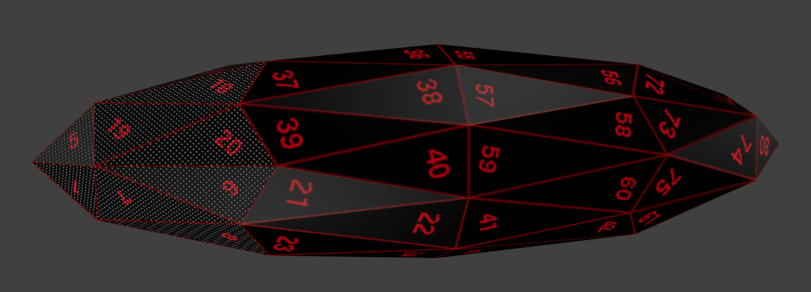

Figure 1: The UV-map for an elongated icosphere. The white dots each represent a touch sensor. They are dense in the regions that describe the tip of the finger, and less dense along the dorsal side of the finger opposite the tip.

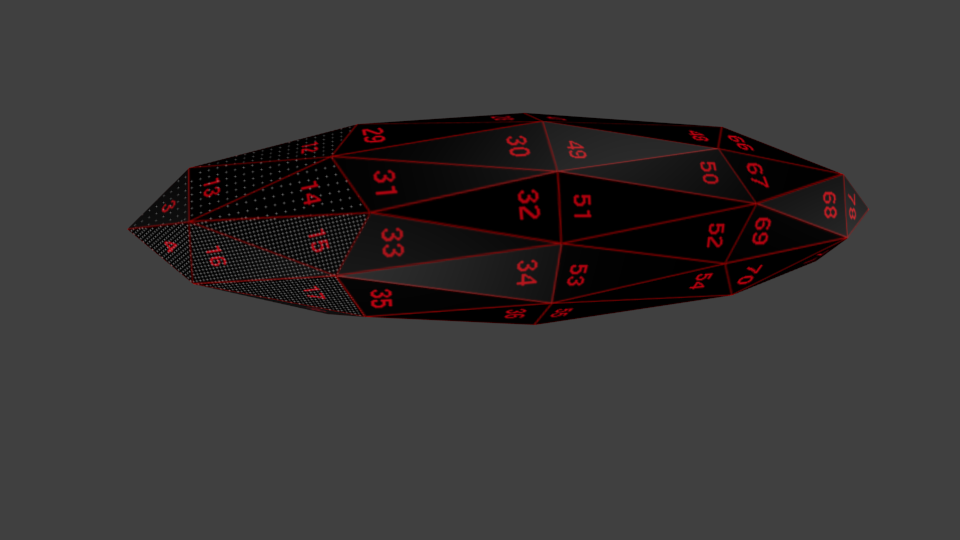

Figure 2: Ventral side of the UV-mapped finger. Notice the density of touch sensors at the tip.

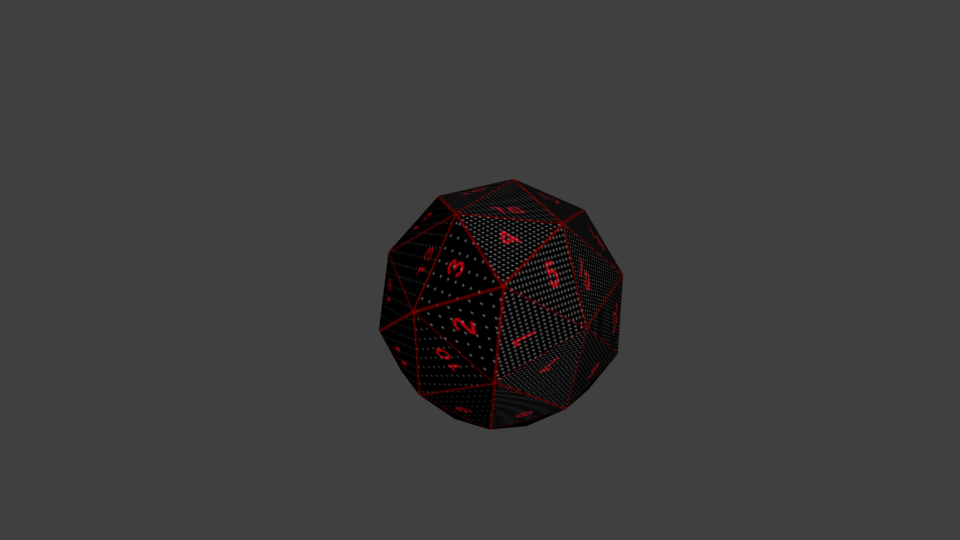

Figure 3: Side view of the UV-mapped finger.

Figure 4: Head on view of the finger. In both the head and side views you can see the divide where the touch-sensors transition from high density to low density.

The following code loads images and gets the locations of the white pixels so that they can be used to create senses. load-image finds images using jMonkeyEngine's asset-manager, so the image path is expected to be relative to the assets directory. Thanks to Dylan for the beautiful version of filter-pixels.

    (defn load-image
      "Load an image as a BufferedImage using the asset-manager system."
      [asset-relative-path]
      (ImageToAwt/convert
       (.getImage (.loadTexture (asset-manager) asset-relative-path))
       false false 0))

    (def white 0xFFFFFF)

    (defn white? [rgb]
      (= (bit-and white rgb) white))

    (defn filter-pixels
      "List the coordinates of all pixels matching pred, within the bounds
       provided. If bounds are not specified then the entire image is
       searched.
       bounds -> [x0 y0 width height]"
      {:author "Dylan Holmes"}
      ([pred #^BufferedImage image]
         (filter-pixels pred image
                        [0 0 (.getWidth image) (.getHeight image)]))
      ([pred #^BufferedImage image [x0 y0 width height]]
         ((fn accumulate [x y matches]
            (cond (>= y (+ height y0)) matches
                  ;; end of row: restart at the left edge of the bounds
                  (>= x (+ width x0)) (recur x0 (inc y) matches)
                  (pred (.getRGB image x y))
                  (recur (inc x) y (conj matches [x y]))
                  :else (recur (inc x) y matches)))
          x0 y0 [])))

    (defn white-coordinates
      "Coordinates of all the white pixels in a subset of the image."
      ([#^BufferedImage image bounds]
         (filter-pixels white? image bounds))
      ([#^BufferedImage image]
         (filter-pixels white? image)))
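To see what "the locations of the white pixels" looks like in practice, here is a small sketch that paints two white sensor pixels onto a blank image and reads their coordinates back. It uses only the JDK's BufferedImage, not the asset-manager, and the helper names white-pixel?, white-pixel-coords and sensor-map are hypothetical. It scans the whole image with a for comprehension rather than reproducing filter-pixels exactly.

```clojure
(import 'java.awt.image.BufferedImage)

(defn white-pixel?
  "True when the red, green and blue channels are all 0xFF (alpha ignored)."
  [rgb]
  (= 0xFFFFFF (bit-and 0xFFFFFF rgb)))

(defn white-pixel-coords
  "Hypothetical helper: [x y] of every white pixel, scanned row by row."
  [^BufferedImage image]
  (vec (for [y (range (.getHeight image))
             x (range (.getWidth image))
             :when (white-pixel? (.getRGB image x y))]
         [x y])))

;; A 4x3 image that starts out black, with two "sensors" painted white.
(def sensor-map
  (doto (BufferedImage. 4 3 BufferedImage/TYPE_INT_RGB)
    (.setRGB 1 0 0xFFFFFF)
    (.setRGB 3 2 0xFFFFFF)))

(println (white-pixel-coords sensor-map)) ; prints [[1 0] [3 2]]
```

Each returned pair is one sensor position in UV-image coordinates, which is the form the later sense code consumes.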

2.2 Topology

Information from the senses is transmitted to the brain via bundles of axons, whether it be the optic nerve or the spinal cord. While these bundles more or less preserve the overall topology of a sense's two-dimensional surface, they do not preserve the precise Euclidean distances between every sensor. collapse is here to smoosh the sensors described by a UV-map into a contiguous region that still preserves the topology of the original sense.

    (in-ns 'cortex.sense)

    (defn average [coll]
      (/ (reduce + coll) (count coll)))

    (defn- collapse-1d
      "One dimensional helper for collapse."
      [center line]
      (let [length (count line)
            num-above (count (filter (partial < center) line))
            num-below (- length num-above)]
        (range (- center num-below)
               (+ center num-above))))

    (defn collapse
      "Take a sequence of pairs of integers and collapse them into a
       contiguous bitmap with no \"holes\" or negative entries, as close to
       the origin [0 0] as the shape permits. The order of the points is
       preserved.

       eg.
         (collapse [[-5 5] [5 5]      -->  [[0 1] [1 1]
                    [-5 -5] [5 -5]])  -->   [0 0] [1 0]]

         (collapse [[-5 5] [-5 -5]    -->  [[0 1] [0 0]
                    [ 5 -5] [ 5 5]])  -->   [1 0] [1 1]]"
      [points]
      (if (empty? points) []
          (let [num-points (count points)
                ;; center is [mean-x mean-y], truncated to integers
                center (vector
                        (int (average (map first points)))
                        (int (average (map second points))))
                ;; squash each column vertically around the center row
                flattened
                (reduce
                 concat
                 (map
                  (fn [column]
                    (map vector
                         (map first column)
                         (collapse-1d (second center)
                                      (map second column))))
                  (partition-by first (sort-by first points))))
                ;; then squash each row horizontally around the center column
                squeezed
                (reduce
                 concat
                 (map
                  (fn [row]
                    (map vector
                         (collapse-1d (first center)
                                      (map first row))
                         (map second row)))
                  (partition-by second (sort-by second flattened))))
                ;; shift so the smallest coordinates sit at [0 0]
                relocated
                (let [min-x (apply min (map first squeezed))
                      min-y (apply min (map second squeezed))]
                  (map (fn [[x y]]
                         [(- x min-x)
                          (- y min-y)])
                       squeezed))
                point-correspondence
                (zipmap (sort points) (sort relocated))

                original-order
                (vec (map point-correspondence points))]
            original-order)))
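The one-dimensional helper is the heart of the smooshing, so it is worth seeing in isolation. The sketch below copies the body of collapse-1d under the hypothetical name squeeze-1d (the original is private to cortex.sense) and runs it on two small inputs. Values above the center are packed immediately at and above it, and the rest are packed immediately below it.

```clojure
(defn squeeze-1d
  "Copy of collapse-1d under a hypothetical name, so this snippet is
   self-contained. Returns a gap-free run of integers around center with
   as many entries as line has values."
  [center line]
  (let [length    (count line)
        num-above (count (filter (partial < center) line))
        num-below (- length num-above)]
    (range (- center num-below) (+ center num-above))))

;; One value below 0 and one above: they end up adjacent.
(println (squeeze-1d 0 [5 -5]))        ; prints (-1 0)

;; Two values at or below 3 and three above: five contiguous slots.
(println (squeeze-1d 3 [1 2 7 9 10]))  ; prints (1 2 3 4 5)
```

collapse applies this first to every column and then to every row, which is why the result has no holes while neighbors stay neighbors.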

3 Viewing Sense Data

It's vital to see the sense data to make sure that everything is behaving as it should. view-sense and its helper, view-image, are here so that each sense can define its own way of turning sense-data into pictures, while the actual rendering of said pictures stays in one central place. points->image helps senses generate a base image onto which they can overlay actual sense data.

    (in-ns 'cortex.sense)

    (defn view-image
      "Initializes a JPanel on which you may draw a BufferedImage.
       Returns a function that accepts a BufferedImage and draws it to the
       JPanel. If given a directory it will save the images as png files
       starting at 0000000.png and incrementing from there."
      ([#^File save title]
         (let [idx (atom -1)
               image
               (atom
                (BufferedImage. 1 1 BufferedImage/TYPE_4BYTE_ABGR))
               panel
               (proxy [JPanel] []
                 (paint
                   [graphics]
                   (proxy-super paintComponent graphics)
                   (.drawImage graphics @image 0 0 nil)))
               frame (JFrame. title)]
           (SwingUtilities/invokeLater
            (fn []
              (doto frame
                (-> (.getContentPane) (.add panel))
                (.pack)
                (.setLocationRelativeTo nil)
                (.setResizable true)
                (.setVisible true))))
           (fn [#^BufferedImage i]
             (reset! image i)
             (.setSize frame (+ 8 (.getWidth i)) (+ 28 (.getHeight i)))
             (.repaint panel 0 0 (.getWidth i) (.getHeight i))
             (if save
               (ImageIO/write
                i "png"
                (File. save (format "%07d.png" (swap! idx inc))))))))
      ([#^File save]
         (view-image save "Display Image"))
      ([] (view-image nil)))

    (defn view-sense
      "Take a kernel that produces a BufferedImage from some sense data
       and return a function which takes a list of sense data, uses the
       kernel to convert to images, and displays those images, each in
       its own JFrame."
      [sense-display-kernel]
      (let [windows (atom [])]
        (fn this
          ([data]
             (this data nil))
          ([data save-to]
             (if (> (count data) (count @windows))
               (reset!
                windows
                (doall
                 (map
                  (fn [idx]
                    (if save-to
                      (let [dir (File. save-to (str idx))]
                        (.mkdir dir)
                        (view-image dir))
                      (view-image)))
                  (range (count data))))))
             (dorun
              (map
               (fn [display datum]
                 (display (sense-display-kernel datum)))
               @windows data))))))

    (defn points->image
      "Take a collection of points and visualize it as a BufferedImage."
      [points]
      (if (empty? points)
        (BufferedImage. 1 1 BufferedImage/TYPE_BYTE_BINARY)
        (let [xs (vec (map first points))
              ys (vec (map second points))
              x0 (apply min xs)
              y0 (apply min ys)
              width (- (apply max xs) x0)
              height (- (apply max ys) y0)
              image (BufferedImage. (inc width) (inc height)
                                    BufferedImage/TYPE_INT_RGB)]
          (dorun
           (for [x (range (.getWidth image))
                 y (range (.getHeight image))]
             (.setRGB image x y 0xFF0000)))
          (dorun
           (for [index (range (count points))]
             (.setRGB image (- (xs index) x0) (- (ys index) y0) -1)))
          image)))

    (defn gray
      "Create a gray RGB pixel with R, G, and B set to num. num must be
       between 0 and 255."
      [num]
      (+ num
         (bit-shift-left num 8)
         (bit-shift-left num 16)))
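The pixel packing used by gray can be checked without opening any windows. This sketch repeats the same arithmetic under the hypothetical name gray-pixel and adds a hypothetical channels helper that unpacks the result, to show that the red, green and blue bytes really are equal.

```clojure
(defn gray-pixel
  "Same arithmetic as gray: the value num (0-255) is written into the
   blue byte, the green byte (shift 8) and the red byte (shift 16)."
  [num]
  (+ num
     (bit-shift-left num 8)
     (bit-shift-left num 16)))

(defn channels
  "Hypothetical helper: unpack a 24-bit RGB integer into [r g b]."
  [rgb]
  [(bit-and 0xFF (bit-shift-right rgb 16))
   (bit-and 0xFF (bit-shift-right rgb 8))
   (bit-and 0xFF rgb)])

(println (format "%06X" (gray-pixel 128))) ; prints 808080
(println (channels (gray-pixel 200)))      ; prints [200 200 200]
```

A sense's display kernel can use a value like this to draw sensor activation as a brightness on top of the base image from points->image.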

4 Building a Sense from Nodes

My method for defining senses in Blender is the following:

Senses like vision and hearing are localized to a single point and follow a particular object around. For these:

- Create a single top-level empty node whose name is the name of the sense

- Add empty nodes which each contain meta-data relevant to the sense, including a UV-map describing the number/distribution of sensors if applicable.

- Make each empty-node the child of the top-level node.

sense-nodes below generates functions to find these children.

For touch, store the path to the UV-map which describes touch-sensors in the meta-data of the object to which that map applies.

Each sense provides code that analyzes the Node structure of the creature and creates sense-functions. This code also modifies the Node structure if necessary.

Empty nodes created in Blender have no appearance or physical presence in jMonkeyEngine, but do appear in the scene graph. Empty nodes that represent a sense which "follows" another geometry (like eyes and ears) follow the closest physical object. closest-node finds this closest object given the Creature and a particular empty node.

    (defn sense-nodes
      "For some senses there is a special empty blender node whose
       children are considered markers for an instance of that sense. This
       function generates functions to find those children, given the name
       of the special parent node."
      [parent-name]
      (fn [#^Node creature]
        (if-let [sense-node (.getChild creature parent-name)]
          (seq (.getChildren sense-node))
          (do ;;(println-repl "could not find" parent-name "node")
              []))))

    (defn closest-node
      "Return the physical node in creature which is closest to the given
       node."
      [#^Node creature #^Node empty]
      (loop [radius (float 0.01)]
        (let [results (CollisionResults.)]
          (.collideWith
           creature
           (BoundingBox. (.getWorldTranslation empty)
                         radius radius radius)
           results)
          (if-let [target (first results)]
            (.getGeometry target)
            (recur (float (* 2 radius)))))))

    (defn world-to-local
      "Convert the world coordinates into coordinates relative to the
       object (i.e. local coordinates), taking into account the rotation
       of object."
      [#^Spatial object world-coordinate]
      (.worldToLocal object world-coordinate nil))

    (defn local-to-world
      "Convert the local coordinates into world relative coordinates"
      [#^Spatial object local-coordinate]
      (.localToWorld object local-coordinate nil))
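closest-node works by growing a box around the empty node, doubling its size until it touches something. The following sketch shows the same doubling search on plain [x y z] points instead of a jMonkeyEngine scene graph. The names inside-cube? and first-hit are hypothetical, and testing points for containment is a simplification of the real collision test against geometry.

```clojure
(defn inside-cube?
  "True when point lies within an axis-aligned cube centered on center
   whose half-width is radius."
  [center radius point]
  (every? #(<= (Math/abs (double %)) radius)
          (map - point center)))

(defn first-hit
  "Hypothetical analogue of closest-node: start with a tiny cube around
   center and double it until it contains one of the points. Returns nil
   for an empty collection instead of looping forever."
  [points center]
  (when (seq points)
    (loop [radius 0.01]
      (if-let [hit (first (filter #(inside-cube? center radius %) points))]
        hit
        (recur (* 2 radius))))))

;; The cube reaches half-width 1.28 before it contains anything, and the
;; only point inside at that size is [0 0 -1].
(println (first-hit [[10 0 0] [0 3 0] [0 0 -1]] [0 0 0])) ; prints [0 0 -1]
```

Doubling keeps the number of tests logarithmic in the distance to the nearest object. In this sketch, if several points enter the cube at the same step, the first one in the collection is returned, so the result is only approximately the closest.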

4.1 Sense Binding

bind-sense binds either a Camera or a Listener object to any object so that they will follow that object no matter how it moves. It is used to create both eyes and ears.

    (defn bind-sense
      "Bind the sense to the Spatial such that it will maintain its
       current position relative to the Spatial no matter how the spatial
       moves. 'sense can be either a Camera or Listener object."
      [#^Spatial obj sense]
      (let [sense-offset (.subtract (.getLocation sense)
                                    (.getWorldTranslation obj))
            initial-sense-rotation (Quaternion. (.getRotation sense))
            base-anti-rotation (.inverse (.getWorldRotation obj))]
        (.addControl
         obj
         (proxy [AbstractControl] []
           (controlUpdate [tpf]
             (let [total-rotation
                   (.mult base-anti-rotation (.getWorldRotation obj))]
               (.setLocation
                sense
                (.add
                 (.mult total-rotation sense-offset)
                 (.getWorldTranslation obj)))
               (.setRotation
                sense
                (.mult total-rotation initial-sense-rotation))))
           (controlRender [_ _])))))
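The idea behind bind-sense is to remember the sense's offset from the object at bind time, then re-apply that offset, rotated by however much the object has turned since. Here is a two-dimensional sketch of the position half of that idea, using angles in place of quaternions. The names rotate-2d, make-follower and follow are hypothetical, and the sketch does not attempt to reproduce the quaternion arithmetic or the orientation update of the real function.

```clojure
(defn rotate-2d
  "Rotate the vector [x y] counter-clockwise by angle radians."
  [angle [x y]]
  (let [c (Math/cos angle)
        s (Math/sin angle)]
    [(- (* c x) (* s y))
     (+ (* s x) (* c y))]))

(defn make-follower
  "Capture the sensor's offset from the body at bind time. Returns a
   function that maps a later body position and angle to the position
   the sensor should have."
  [body-pos body-angle sensor-pos]
  (let [offset (mapv - sensor-pos body-pos)]
    (fn [new-pos new-angle]
      (mapv + new-pos
            (rotate-2d (- new-angle body-angle) offset)))))

;; Sensor starts one unit to the right of a body at the origin.
(def follow (make-follower [0.0 0.0] 0.0 [1.0 0.0]))

;; Pure translation: the sensor keeps its offset.
(println (follow [5.0 5.0] 0.0)) ; prints [6.0 5.0]

;; A quarter turn swings the sensor to (approximately) one unit above.
(println (follow [0.0 0.0] (/ Math/PI 2)))
```

In bind-sense the same update runs once per frame inside controlUpdate, which is what keeps the camera or listener glued to the object.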

Here is some example code which shows how a camera bound to a blue box with bind-sense moves as the box is buffeted by white cannonballs.

    (in-ns 'cortex.test.sense)

    (defn test-bind-sense
      "Show a camera that stays in the same relative position to a blue
       cube."
      ([] (test-bind-sense false))
      ([record?]
         (let [eye-pos (Vector3f. 0 30 0)
               rock (box 1 1 1 :color ColorRGBA/Blue
                         :position (Vector3f. 0 10 0)
                         :mass 30)
               table (box 3 1 10 :color ColorRGBA/Gray :mass 0
                          :position (Vector3f. 0 -3 0))]
           (world
            (nodify [rock table])
            standard-debug-controls
            (fn init [world]
              (let [cam (doto (.clone (.getCamera world))
                          (.setLocation eye-pos)
                          (.lookAt Vector3f/ZERO
                                   Vector3f/UNIT_X))]
                (bind-sense rock cam)
                (.setTimer world (RatchetTimer. 60))
                (if record?
                  (Capture/captureVideo
                   world
                   (File. "/home/r/proj/cortex/render/bind-sense0")))
                (add-camera!
                 world cam
                 (comp
                  (view-image
                   (if record?
                     (File. "/home/r/proj/cortex/render/bind-sense1")))
                  BufferedImage!))
                (add-camera! world (.getCamera world) no-op)))
            no-op))))


With this, eyes are easy — you just bind the camera closer to the desired object, and set it to look outward instead of inward as it does in the video.

(N.B.: the video was created with the following commands.)

4.1.1 Combine Frames with ImageMagick

    (ns cortex.video.magick
      (:import java.io.File)
      (:use clojure.java.shell))

    (defn combine-images []
      (let [idx (atom -1)
            left (rest
                  (sort
                   (file-seq
                    (File. "/home/r/proj/cortex/render/bind-sense0/"))))
            right (rest
                   (sort
                    (file-seq
                     (File. "/home/r/proj/cortex/render/bind-sense1/"))))
            sub (rest
                 (sort
                  (file-seq
                   (File. "/home/r/proj/cortex/render/bind-senseB/"))))
            sub* (concat sub (repeat 1000 (last sub)))]
        (dorun
         (map
          (fn [im-1 im-2 sub]
            (sh "convert" (.getCanonicalPath im-1)
                (.getCanonicalPath im-2) "+append"
                (.getCanonicalPath sub) "-append"
                (.getCanonicalPath
                 (File. "/home/r/proj/cortex/render/bind-sense/"
                        (format "%07d.png" (swap! idx inc))))))
          left right sub*))))

4.1.2 Encode Frames with ffmpeg

cd /home/r/proj/cortex/render/

ffmpeg -r 30 -i bind-sense/%07d.png -b:v 9000k -vcodec libtheora bind-sense.ogg

5 Headers

    (ns cortex.sense
      "Here are functions useful in the construction of two or more
       sensors/effectors."
      {:author "Robert McIntyre"}
      (:use (cortex world util))
      (:import ij.process.ImageProcessor)
      (:import jme3tools.converters.ImageToAwt)
      (:import java.awt.image.BufferedImage)
      (:import com.jme3.collision.CollisionResults)
      (:import com.jme3.bounding.BoundingBox)
      (:import (com.jme3.scene Node Spatial))
      (:import com.jme3.scene.control.AbstractControl)
      (:import (com.jme3.math Quaternion Vector3f))
      (:import javax.imageio.ImageIO)
      (:import java.io.File)
      (:import (javax.swing JPanel JFrame SwingUtilities)))

    (ns cortex.test.sense
      (:use (cortex world util sense vision))
      (:import
       java.io.File
       (com.jme3.math Vector3f ColorRGBA)
       (com.aurellem.capture RatchetTimer Capture)))

6 Source Listing

7 Next

Now that some of the preliminaries are out of the way, in the next post I'll create a simulated body.